ChatGPT is genuinely useful. But treating it like an all-knowing oracle is where things start to go wrong.

Here’s the thing about large language models (LLMs) like ChatGPT: they don’t actually “know” anything. They generate text by repeatedly predicting the most likely next word (more precisely, the next token) based on patterns in their training data. That means they can deliver a confident, beautifully written answer that’s either years out of date or completely made up, which the AI world calls a “hallucination.”

For silly creative tasks, that’s fine. For anything that touches your health, money, or legal standing, it can get seriously dangerous. Here are 11 areas where you should skip ChatGPT and go straight to a real human expert.

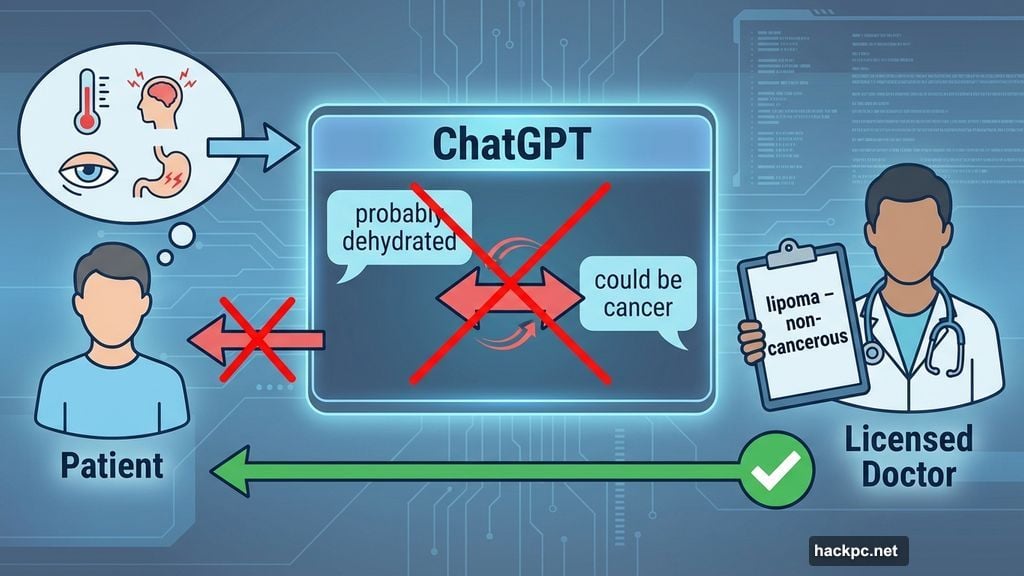

Physical Health Diagnoses Belong to Doctors

I’ve personally typed my symptoms into ChatGPT just to see what happens. The results swung from “you’re probably dehydrated” to “this could be cancer.” That’s a terrifying ride for something that turned out to be a harmless lipoma — a common, non-cancerous lump that affects about one in every 1,000 people. My actual licensed doctor told me that. ChatGPT told me to panic.

To be fair, ChatGPT has some legitimate health uses. It can help you draft questions for a doctor’s appointment, translate confusing medical jargon, or organize a timeline of your symptoms. That kind of preparation genuinely helps.

But ChatGPT can’t order lab tests, physically examine you, or carry malpractice insurance. So use it to prepare for the doctor’s office, not to replace it.

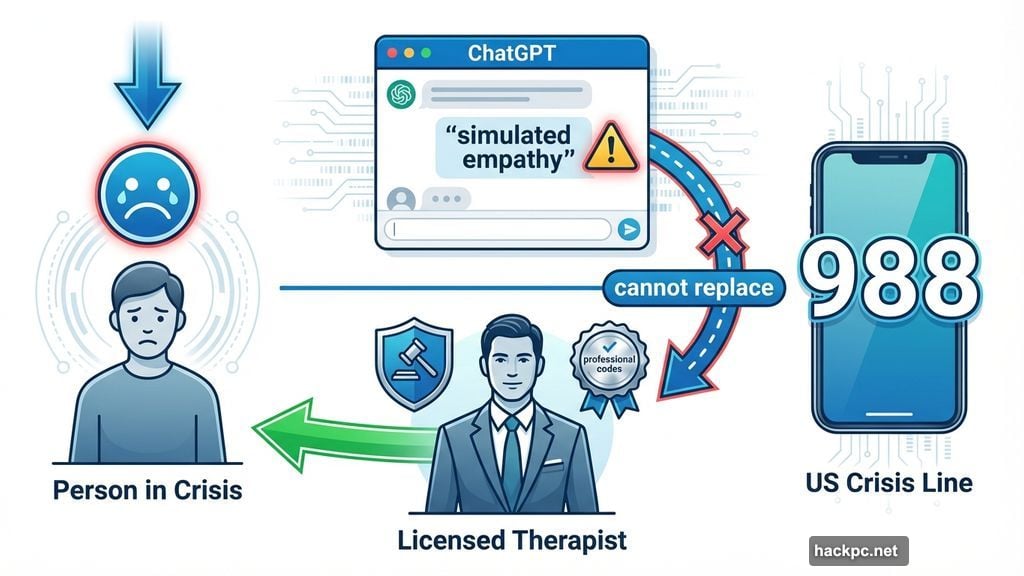

Mental Health Support Needs Real Human Connection

Some people use ChatGPT as a stand-in therapist. And yes, it can offer grounding techniques or help you process thoughts in the moment. CNET’s Corin Cesaric found it mildly useful for working through grief, as long as she kept its limits firmly in mind.

But here’s what ChatGPT genuinely cannot do: read your body language, pick up on tone, or provide real empathy. It can only simulate empathy. A licensed therapist operates under legal and professional codes specifically designed to protect you from harm.

ChatGPT’s advice can misfire, overlook serious red flags, or reinforce biases baked into its training data. The hard, messy, deeply human work of mental health belongs with an actual trained human. If you or someone you know is in crisis right now, dial 988 in the US or contact your local crisis line.

Emergency Safety Decisions Can’t Wait for a Prompt

If your carbon monoxide alarm goes off, please don’t open ChatGPT first. Go outside. Then ask questions.

ChatGPT can’t smell gas, detect smoke, or call 911. Every second you spend typing out a question is a second you’re not evacuating. Large language models can only work with the information you feed them — and in a genuine emergency, that’s almost always too little and too late.

Think of ChatGPT as a post-incident explainer, never a first responder.

Personalized Financial and Tax Planning Requires a Pro

ChatGPT can explain what an ETF is or walk you through the basics of a Roth IRA. But it doesn’t know your debt-to-income ratio, your state’s current tax bracket, your filing status, your deductions, or your retirement goals. Its training data may also stop short of the current tax year, which means the guidance it offers could already be stale.

Some people dump their 1099 totals into ChatGPT for a DIY return. That’s a gamble. A real CPA can catch a hidden deduction worth hundreds of dollars or flag a mistake that could cost you thousands. When IRS penalties are on the line, that professional fee pays for itself.

Also worth noting: anything you share with an AI chatbot may become part of its training data. That includes your income, your Social Security number, and your bank account details. Treat those like passwords — keep them off the prompt window entirely.

Confidential and Regulated Data Needs to Stay Private

This one hits close to home as a tech journalist. Embargoed press releases land in my inbox regularly, but I’ve never considered pasting one into ChatGPT for a quick summary. The moment I did, that text would leave my control and land on a third-party server, instantly violating my nondisclosure agreement.

The same risk applies to anything protected by HIPAA, GDPR, the California Consumer Privacy Act, or basic trade-secret law. Client contracts, medical charts, passports, birth certificates — all of it. Once sensitive information enters a prompt window, you can’t control where it’s stored, who might review it internally, or whether it ends up in future model training.

A simple rule: if you wouldn’t paste it into a public Slack channel, don’t paste it into ChatGPT.

Academic Cheating Has Real Consequences Now

AI-assisted cheating operates on a far larger scale than, say, sneaking equations onto a phone during a calculus exam. Tools like Turnitin get better at detecting AI-generated writing every semester. Professors are already recognizing what people in the industry call “ChatGPT voice,” that overly smooth, strangely formal style that signals something wasn’t written by a human.

Suspension, expulsion, and, in some professional fields, license revocation are all real risks. Beyond the consequences, you’re also cheating yourself out of actually learning something. Use ChatGPT as a study companion to explain concepts or quiz you, not as a ghostwriter submitting work under your name.

Breaking News and Real-Time Updates Need Live Sources

Since OpenAI launched ChatGPT Search in late 2024 and opened it to everyone in early 2025, the platform can pull fresh web results, stock quotes, and sports scores with clickable citations. That’s a genuine improvement.

But it still won’t stream continuous live updates on its own. Every refresh requires a new prompt. So when speed matters — breaking news, live market movements, urgent weather alerts — your best sources are still dedicated news sites, official press releases, push notifications, and live broadcast streams.

Sports Betting on AI Tips Is a Fast Way to Lose Money

Full transparency: I once hit a three-team parlay during the NCAA men’s basketball tournament using ChatGPT suggestions. But I would never recommend that approach to anyone, and I only cashed out because I checked every single claim against real-time odds myself.

ChatGPT regularly hallucinates player statistics, misreports injury status, and gets win-loss records wrong. It cannot predict tomorrow’s box score. Treating it as a reliable gambling advisor is a quick way to lose real money. Use it to understand betting concepts if you’re new to it — not to make actual picks.

Legal Documents Need an Actual Lawyer

ChatGPT handles basic legal explanations surprisingly well. Want to understand what a revocable living trust actually does? Ask away. It’ll give you a solid plain-English breakdown.

The moment you ask it to draft real legal text, though, you’re rolling the dice. Estate law varies by state and sometimes even by county. A missing witness signature or an absent notarization clause can invalidate an entire document. Let ChatGPT help you build a list of questions to bring to your lawyer. Then pay that lawyer to turn those questions into a document that actually holds up in court.

Anything Illegal

This one should go without saying, but here we are.

Making Art That You’ll Pass Off as Your Own

This last one is personal opinion, not objective fact. I use ChatGPT for brainstorming and headline ideas — that’s supplementation, not substitution. There’s a meaningful difference between using AI as a creative springboard and submitting AI-generated work as your own artistic expression.

Art carries the weight of human experience, perspective, and intention behind it. Using ChatGPT to generate creative work and presenting it as yours strips away everything that makes art worth making in the first place. By all means, use it as a tool. Just be honest about what it is.

ChatGPT is a remarkable piece of technology, and it genuinely earns its place in everyday workflows. But it works best as a smart assistant, not a replacement for expertise, judgment, or human connection. The more clearly you understand where it stops being useful, the more confidently you can use it where it actually shines.
