AI is generating more code than ever. And now, a lot of that code is showing up with bugs nobody fully understands.

Anthropic just launched a new tool called Code Review, built directly into Claude Code. The goal is simple: catch problems before they reach your codebase. And given how fast AI-generated code is piling up, the timing makes a lot of sense.

“Vibe Coding” Created a New Kind of Bottleneck

There’s a style of development spreading fast through engineering teams right now. Developers describe what they want in plain language, and AI tools generate large chunks of working code almost instantly. People call it “vibe coding,” and it has genuinely sped up how fast teams ship features.

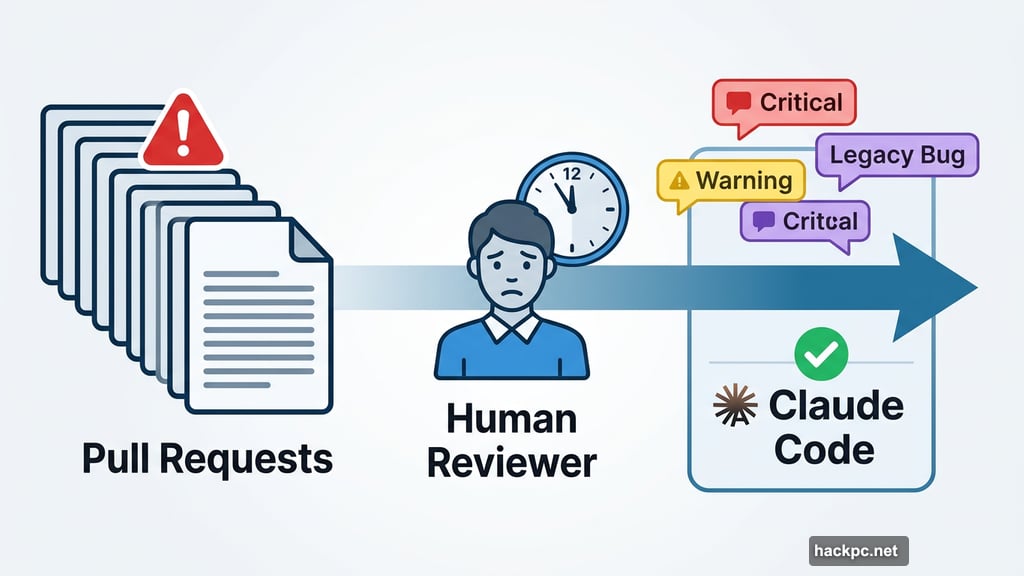

But speed comes with tradeoffs. More code means more pull requests. More pull requests mean more reviews. And human reviewers can only move so fast. So the very tool designed to speed things up has created a fresh bottleneck on the other side of the process.

Cat Wu, Anthropic’s head of product, explained the problem directly: “Claude Code has dramatically increased code output, which has increased pull request reviews that have caused a bottleneck to shipping code.”

Pull requests are how developers formally submit code changes for team review before anything goes live. When they stack up faster than reviewers can handle them, finished work sits in a queue instead of shipping.

How Code Review Actually Works

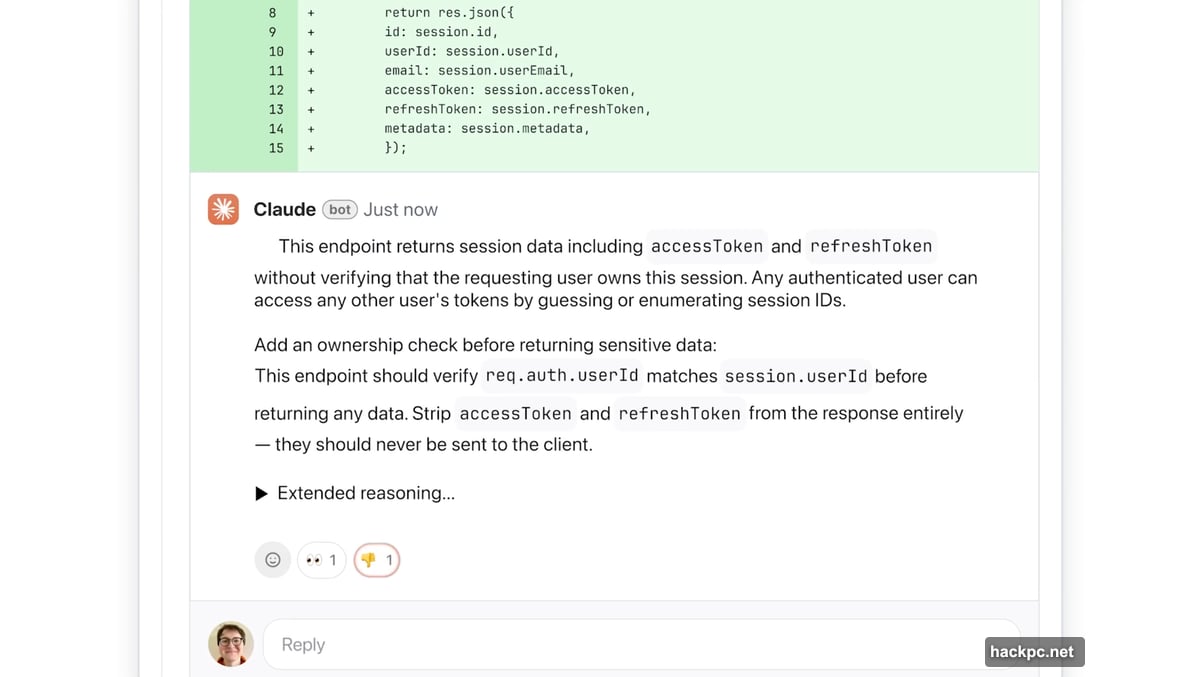

Once enabled, Code Review integrates with GitHub and runs automatically on every pull request. It reads through submitted code, flags potential issues, and leaves comments explaining what it found and how to fix it.

The tool deliberately focuses on logical errors rather than style. That’s a meaningful design choice. Anyone who has used automated code feedback tools before knows how annoying it gets when the suggestions are trivial or pedantic. Anthropic wanted to avoid that.

“We decided we’re going to focus purely on logic errors,” Wu said. “This way we’re catching the highest priority things to fix.”

The system color-codes its findings by severity. Red flags represent the most critical issues. Yellow highlights potential problems worth a second look. Purple marks issues tied to preexisting code or older bugs already in the system.

It also explains its reasoning step by step, walking through what the problem is, why it matters, and what a reasonable fix might look like. Engineering leads can add custom checks based on their team’s internal standards.
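To make that structure concrete, here is a minimal sketch of how a single finding and its severity label could be modeled. This is not Anthropic’s data format: the `Severity` and `Finding` names, the field names, and the decision to sort purple after yellow are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value sorts first. The relative order of YELLOW and PURPLE
    # is an assumption; the article only says red is the most critical.
    RED = 0      # most critical issues
    YELLOW = 1   # potential problems worth a second look
    PURPLE = 2   # issues tied to preexisting code or older bugs


@dataclass(frozen=True)
class Finding:
    severity: Severity
    problem: str          # what the problem is
    why_it_matters: str   # why it matters
    suggested_fix: str    # what a reasonable fix might look like


def by_severity(findings):
    """Return findings with the most critical first."""
    return sorted(findings, key=lambda f: f.severity)


# Hypothetical example findings, invented for this sketch.
findings = [
    Finding(Severity.PURPLE, "Old retry loop never backs off",
            "Can hammer a failing service", "Add exponential backoff"),
    Finding(Severity.RED, "Index can run past the end of the list",
            "Crashes on empty input", "Check the length first"),
    Finding(Severity.YELLOW, "Timeout is hard-coded",
            "May be too short in production", "Read it from config"),
]

for f in by_severity(findings):
    print(f"[{f.severity.name}] {f.problem} -> {f.suggested_fix}")
```

Keeping the explanation fields separate from the severity label mirrors the way the tool is described: the color tells a reviewer where to look first, and the text tells them why.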

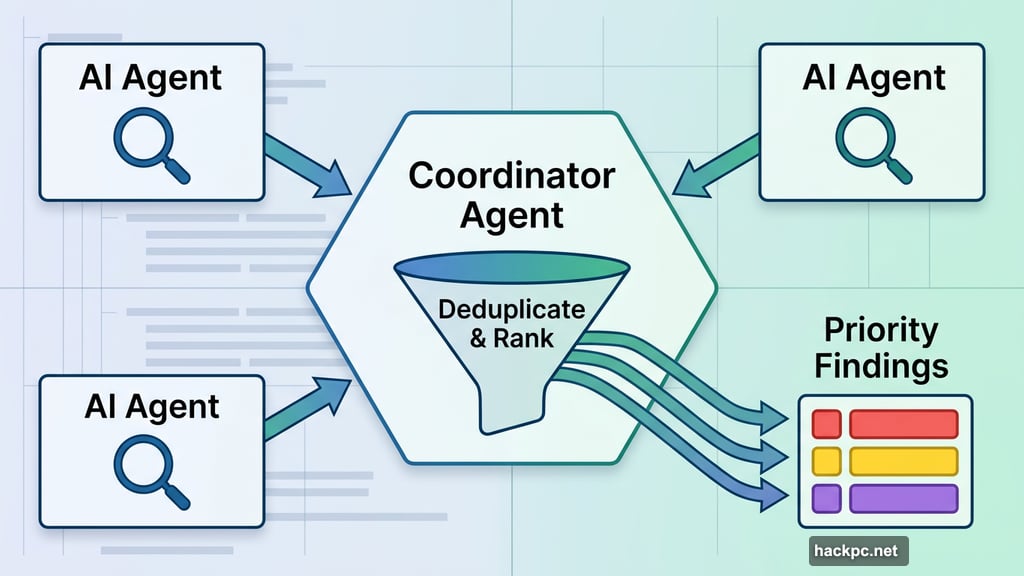

Multi-Agent Architecture Powers the Analysis

Behind the scenes, Code Review uses multiple AI agents working in parallel. Each agent examines the codebase from a different angle. Then a final agent pulls everything together, removes duplicates, and ranks findings by priority.

That approach means the tool can cover more ground quickly. But it also makes Code Review fairly resource-intensive by design.
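The fan-out and merge pattern described above can be sketched in a few lines. This is only an illustration of the general idea, not how Code Review is implemented: the three reviewer functions are hypothetical stand-ins that use simple string checks where a real system would call a model, and the `Finding` type and `run_review` function are invented for this example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import NamedTuple


class Finding(NamedTuple):
    priority: int   # 1 = most urgent (assumed scale)
    line: int
    message: str


# Hypothetical reviewers. Each looks at the same code from one angle.
def find_bare_except(lines):
    return [Finding(1, n, "bare except hides errors")
            for n, text in lines if text.strip() == "except:"]


def find_swallowed_errors(lines):
    # Deliberately overlaps with find_bare_except to show de-duplication.
    return [Finding(1, n, "bare except hides errors")
            for n, text in lines if text.strip().startswith("except:")]


def find_mutable_defaults(lines):
    return [Finding(2, n, "mutable default argument is shared across calls")
            for n, text in lines
            if text.lstrip().startswith("def ") and "=[]" in text]


def run_review(lines, reviewers):
    """Run all reviewers in parallel, then merge, de-duplicate, and rank."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        batches = list(pool.map(lambda review: review(lines), reviewers))
    unique = {finding for batch in batches for finding in batch}
    return sorted(unique)  # tuples sort by priority, then line


code = [
    (1, "def add(item, bucket=[]):"),
    (2, "    try:"),
    (3, "        bucket.append(item)"),
    (4, "    except:"),
    (5, "        pass"),
]

reviewers = [find_bare_except, find_swallowed_errors, find_mutable_defaults]
for finding in run_review(code, reviewers):
    print(finding)
```

Two of the reviewers report the same problem on line 4, but the merge step keeps only one copy. The tradeoff is visible even in this toy version: every reviewer reads the whole input, so the work grows with the number of agents.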

Pricing follows a token-based model, which is standard for AI services. Wu estimated that each review costs somewhere between $15 and $25 on average, depending on code complexity. That’s not cheap, but Anthropic is positioning this as a premium product for enterprise customers who are already generating enormous volumes of AI-assisted code.
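For a rough sense of scale, the per-review estimate can be multiplied out over a month. The $15 and $25 figures come from Wu’s estimate; the volume of 200 pull requests per month is a made-up number for illustration, and actual token-based billing will vary with the size and complexity of each change.

```python
# Per-review range from Wu's estimate, in US dollars.
LOW_PER_REVIEW = 15
HIGH_PER_REVIEW = 25


def monthly_review_cost(prs_per_month):
    """Return a (low, high) monthly cost range in dollars."""
    return prs_per_month * LOW_PER_REVIEW, prs_per_month * HIGH_PER_REVIEW


# Hypothetical team volume: 200 reviewed pull requests per month.
low, high = monthly_review_cost(200)
print(f"${low:,} to ${high:,} per month")  # $3,000 to $5,000 per month
```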

The tool also includes a light security analysis. For teams that need something deeper, Anthropic’s separately launched Claude Code Security goes further.

Who This Is Really Built For

Code Review is currently available in research preview for Claude for Teams and Claude for Enterprise customers. Anthropic specifically called out companies like Uber, Salesforce, and Accenture as the kind of organizations it had in mind when building the tool.

The enterprise focus makes sense given Anthropic’s current momentum. Claude Code’s run-rate revenue has already surpassed $2.5 billion since launch. Enterprise subscriptions have quadrupled since the start of 2026. Anthropic is also navigating legal pressure right now, having filed two lawsuits against the Department of Defense on the same day Code Review launched, following the agency’s designation of Anthropic as a supply chain risk.

In that context, doubling down on enterprise tooling looks like a smart strategic move.

Faster Code With Fewer Surprises

What makes Code Review interesting isn’t just the technology. It’s what it says about where AI-assisted development is heading.

AI tools reduced the friction of writing code. Now there’s a matching need to reduce the friction of reviewing it. The two sides of the development process need to stay in balance, or the whole pipeline jams up.

Wu put it plainly: “As engineers develop with Claude Code, they’re seeing the friction to creating a new feature decrease, and they’re seeing a much higher demand for code review.”

If this tool delivers on its promise, engineering teams could ship faster without accumulating the kind of hidden technical debt that comes back to bite you months later. That’s a genuinely useful outcome, and one that matters more as AI-generated code becomes a permanent part of how software gets built.

The question now is whether automated review can keep pace with automated creation. Early enterprise users will find out soon enough.
