There’s a quiet crisis happening inside creative higher education right now. Students who chose to study illustration, animation, or design because they love making things by hand are being told they need to understand the very technology threatening their careers.

And the tension on campuses has started boiling over.

Earlier this year, anti-AI flyers appeared around CalArts after posters seeking AI artists for a thesis project were altered with protest messages. At the University of Alaska Fairbanks, one film student made their feelings known in the most dramatic way possible: by physically eating another student’s allegedly AI-generated display piece.

This isn’t just a debate about software. It’s a fight over what creative education is actually for.

Generative AI Crashed the Party Fast

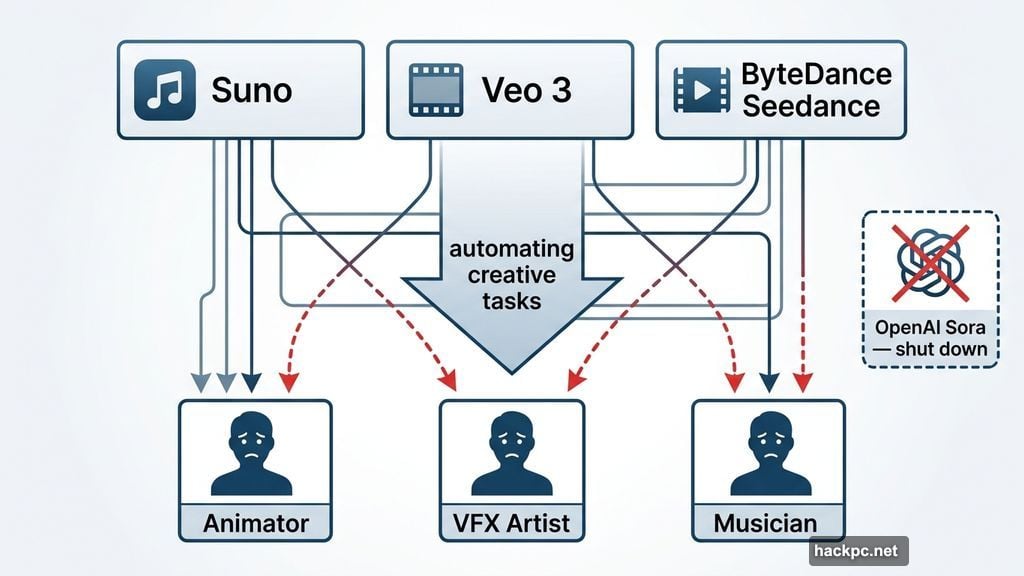

The speed of all this has been genuinely startling. Just a few years ago, text-to-image tools were novelty toys producing blurry messes. Now, tools like Midjourney and Google’s Nano Banana generate polished, stylized images from short text descriptions. Music generators like Suno and Udio pump out songs that sound close enough to popular human artists that they’re showing up on streaming platforms. Video models like Veo 3, ByteDance’s Seedance, and OpenAI’s Sora (which was quietly shut down last week) have animators and VFX artists genuinely worried about their futures.

Almost any creative task you can think of can now be assisted or fully completed with generative AI tools.

Meanwhile, every time a new model drops, social media fills with wild claims about how much of design and media can be automated without any real professional skills. AI providers like Adobe, OpenAI, and Google push back on that framing, insisting their tools are meant to help creatives rather than replace them. But the message from nearly every direction is the same: learn this technology, or risk getting left behind.

Sometimes that message comes directly from the art schools themselves.

Campuses Are Adapting Whether Students Like It or Not

The Massachusetts College of Art and Design, CalArts, London’s Royal College of Art, and many other creative institutions now actively encourage students across disciplines to engage with generative AI tools. This doesn’t mean traditional curricula are being thrown out. Students aren’t necessarily expected to use AI in their own work. But they are expected to understand how it works, what its limitations are, and what the ethical and legal implications look like.

“At CalArts, we aim to incorporate critical engagement with generative AI into our courses and programming to ensure our students can play an active role in shaping future technologies instead of simply reacting to them,” CalArts communications lead Robin Wander told The Verge.

The Pratt Institute put it even more plainly in a public statement. It acknowledged that many AI tools “mine and share/sell user data, are trained on biased datasets, and have significant impacts on the environment.” But it also noted that “fluency with AI tools is a growing competency sought by employers and an area of professional development across many industries.”

That tension — knowing AI has serious problems and teaching it anyway — is exactly what makes this moment so uncomfortable for creative educators.

Teaching AI as a Creative Tool, Not a Replacement

The approach most schools seem to be taking is careful. Rather than handing students a prompt box and calling it education, many instructors focus on using AI during the ideation phase — sketching out concepts and visualizing rough ideas early in a project — while keeping the actual creative work human.

Ry Fryar, assistant professor of art at York College of Pennsylvania, described the philosophy to The Observer this way: “The focus is on creativity itself, because without that, the results are common, therefore dull and fundamentally inexpert.” His courses teach students how to guide AI tools at a professional level while also understanding copyright law, ethics, and responsible use standards.

Some programs go further. CalArts launched the Chanel Center for Artists and Technology, which lists artificial intelligence and machine learning as core focus areas. Arizona State University announced a Spring 2026 class called “The Agentic Self,” taught by musician will.i.am, where students in the Games, Arts, Media, and Engineering school will build their own agentic AI systems — essentially personalized digital creative assistants. Will.i.am described the course as “a solution to AI replacing human jobs,” and ASU President Michael Crow said graduates “must be ready for the powerful shift in jobs toward AI.”

Student Sentiment Is Hard to Ignore

Not everyone is buying the optimistic framing. A late 2023 study from the Ringling College of Art and Design found that 70 percent of students felt “somewhat” or “extremely” negative toward AI. Most said outright that they didn’t want it in their curriculum.

That’s a pretty significant number to brush aside. And it makes sense. Students pay serious money to develop skilled creative crafts. The prospect of becoming a prompt engineer — even a well-paid one — probably isn’t why most of them enrolled in art school.

There are also legitimate concerns about how generative AI models are trained. Many scrape protected creative works without consent and without compensating the original artists. For students who idolize working illustrators, animators, and photographers, knowing their eventual employers might use AI trained on those same artists’ stolen work is genuinely troubling.

The Argument for Teaching It Anyway

Wander at CalArts made the case that schools have a responsibility to help students engage directly with these tools precisely because the technology isn’t going away. “This is the best way to equip creative communities with the skills and knowledge to influence how these tools evolve and how they are used in creative work,” she said.

There’s real logic to that position. Creatives who understand AI deeply are better positioned to push back on its worst uses, advocate for ethical standards, and shape how the industry adopts these tools. Ignorance isn’t protection.

But that argument only holds up if schools are also honest about the risks. Teaching AI fluency alongside genuine critical thinking about its environmental costs, copyright issues, and labor implications is very different from simply pointing students toward the latest model and telling them to stay competitive.

The schools that get this balance right will probably produce graduates who are genuinely valuable. The ones that treat AI integration as a checkbox exercise might just be training their students to be undercut by the same tools they were forced to learn.

Watching my brother navigate all of this, I find myself hoping his school lands on the right side of that line. So far, I’m not entirely sure it has.
