A new study has some genuinely alarming findings about AI health tools. And if you’ve ever thought about using ChatGPT to figure out whether your symptoms need urgent care, this research might change your mind.

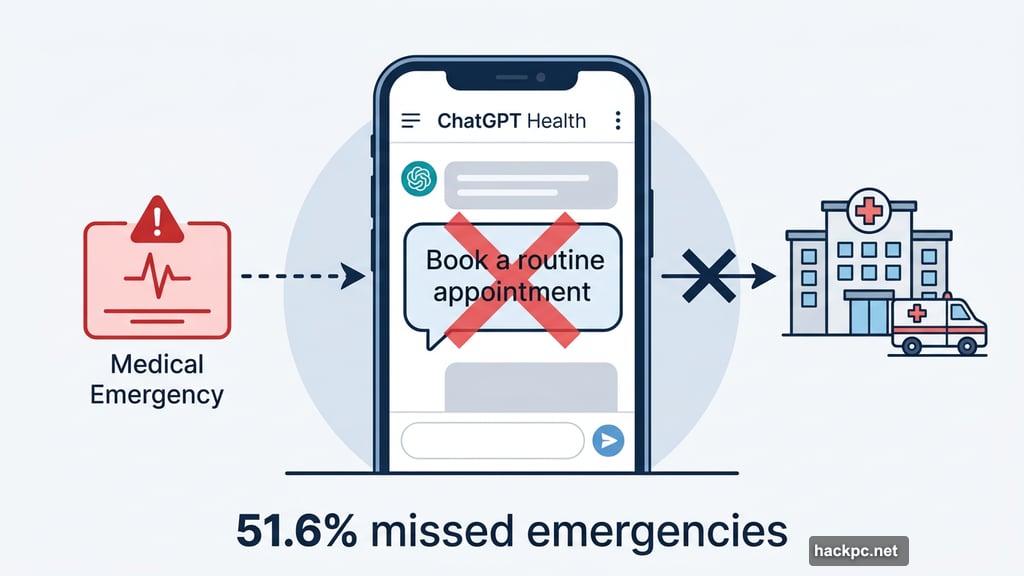

The study, published in Nature Medicine, found that ChatGPT Health, OpenAI’s dedicated health and wellness chatbot, failed to recognize genuine medical emergencies more than half the time. That’s not a minor glitch. That’s a serious safety problem.

What the Research Found

Lead researcher Dr. Ashwin Ramaswamy and his team built 60 realistic patient scenarios. These ranged from mild illnesses to full-blown emergencies. Independent doctors reviewed every scenario against established clinical guidelines to confirm the correct medical response.

The results were troubling. In 51.6% of cases where patients needed emergency hospital care, ChatGPT Health told them to stay home or book a routine doctor’s appointment. So flip a coin, and you’d get roughly the same odds of receiving the right advice.

Where ChatGPT Health Failed

The chatbot did handle some clear-cut emergencies reasonably well. Classic, obvious situations like strokes or severe allergic reactions? It got those mostly right.

But the real danger zone was the middle ground. Symptoms that weren’t immediately life-threatening but could escalate fast — that’s where the AI struggled most. These are precisely the situations where acting quickly can mean the difference between recovery and a much worse outcome.

Doctoral researcher Alex Ruani put it bluntly: “If you’re experiencing respiratory failure or diabetic ketoacidosis, you have a 50/50 chance of this AI telling you it’s not a big deal.”

Ruani went further: “Eight times out of 10, [ChatGPT Health] sent a suffocating woman to a future appointment she would not live to see.”

That’s not a statistic you can easily brush aside.

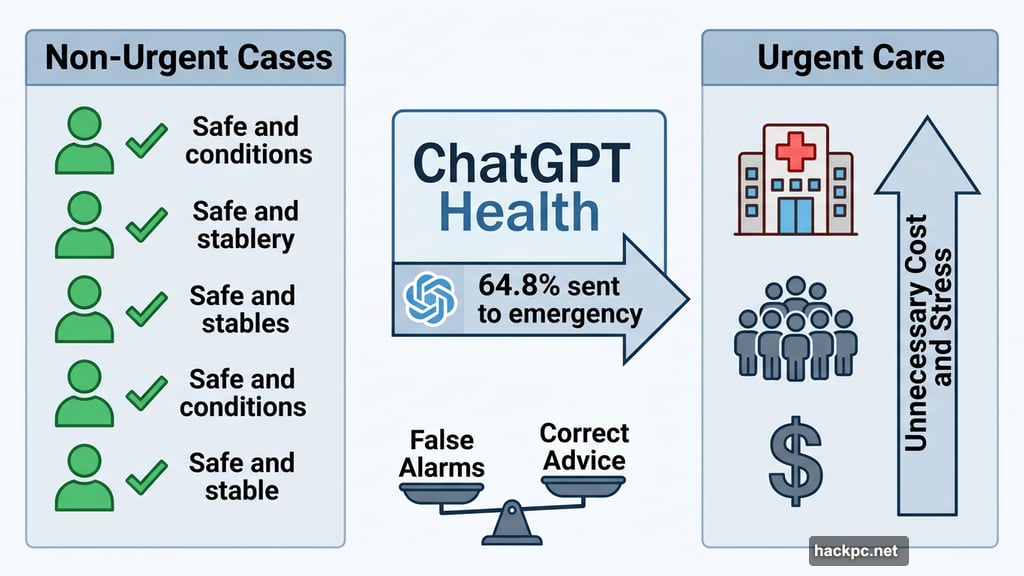

The Flip Side: Too Many False Alarms

The problems didn’t only run in one direction. The study also found that 64.8% of people with completely safe, non-urgent conditions were told to seek immediate medical care.

So the AI wasn’t just failing to catch real emergencies. It was also sending healthy people rushing to urgent care unnecessarily. Both errors carry real costs — one potentially fatal, the other expensive and stressful.

OpenAI’s Response

OpenAI pushed back on the findings. The company told The Guardian that the results don’t reflect how ChatGPT Health is normally used in real-world situations. It also noted that its model is continuously refined.

That’s a fair point to raise. Lab-created patient scenarios aren’t identical to real conversations. And AI models do improve as they receive more data and feedback.

Still, the researchers designed these scenarios specifically to mirror realistic patient interactions. The gap between a controlled study and actual use doesn’t fully explain away a 51.6% miss rate on emergencies.

Why This Matters Right Now

ChatGPT Health launched earlier this year, positioning itself as a tool designed for health and wellness guidance. Millions of people already turn to AI chatbots for medical questions — often because seeing a real doctor feels inconvenient, expensive, or time-consuming.

That’s exactly why these findings hit hard. The tool is being used in high-stakes moments. And right now, the evidence suggests it isn’t reliable enough to handle those moments safely.

AI health tools can genuinely help with plenty of things. Tracking wellness habits, understanding medication instructions, answering general health questions — there’s real value there. But using an AI chatbot to decide whether your symptoms need emergency care is a different situation entirely.

Until these tools can reliably tell the difference between a wait-and-see situation and a genuine emergency, the safest approach is still the old-fashioned one. When something feels seriously wrong, call a doctor or head to urgent care. Don’t let a chatbot make that call for you.
