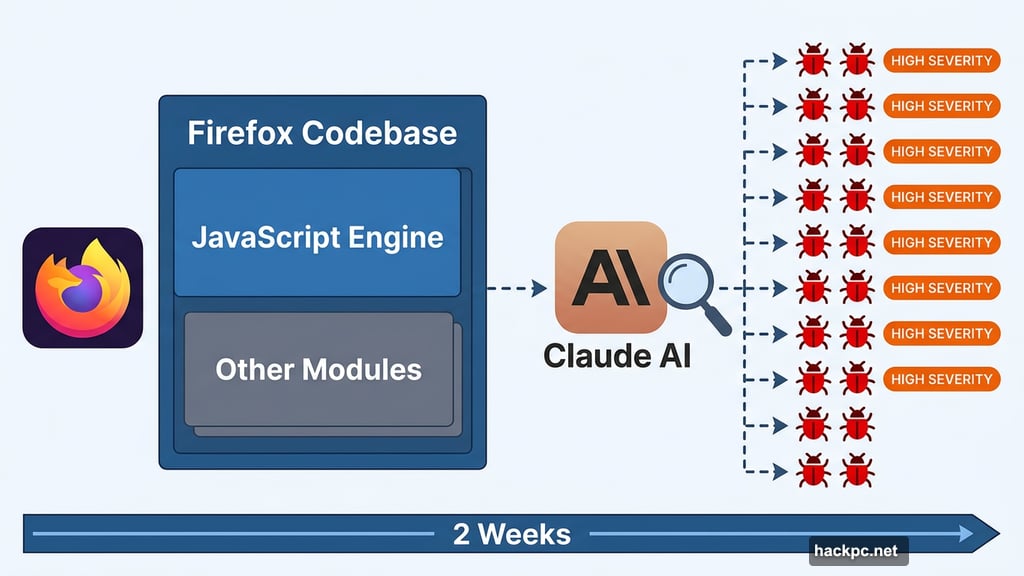

Security testing just got a serious upgrade. Anthropic recently partnered with Mozilla, and its Claude AI discovered 22 separate vulnerabilities in Firefox, all within a two-week window.

That’s a pretty striking result for any security audit, let alone one powered by an AI assistant. And the findings carry real weight for anyone who cares about browser security and the future of open-source software.

Firefox’s Codebase Became the Testing Ground

The Anthropic team chose Firefox deliberately. It’s not a simple project — Firefox represents one of the most complex and thoroughly tested open-source codebases in the world. If Claude could find meaningful bugs there, it would say something significant about AI-assisted security research.

Anthropic used Claude Opus 4.6 throughout the two-week engagement. The team started digging into Firefox’s JavaScript engine first, then expanded outward into other parts of the codebase.

The results were hard to ignore. Claude surfaced 22 vulnerabilities in total, and 14 of them earned a “high-severity” classification. That’s not minor stuff — high-severity bugs are exactly the kind that attackers actively hunt for.

Most Bugs Are Already Fixed

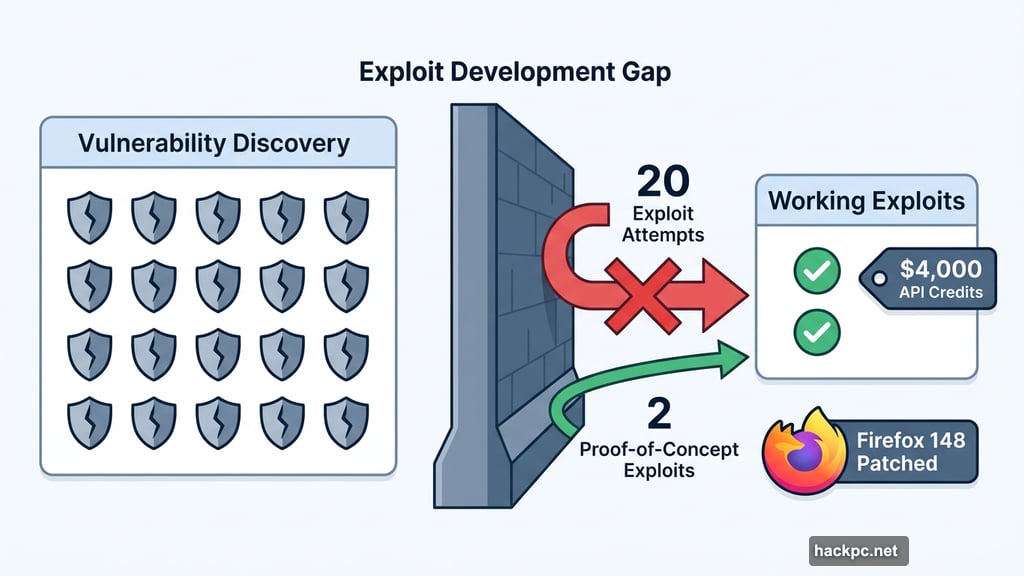

Here’s the reassuring part. The majority of these vulnerabilities have already been patched in Firefox 148, which shipped in February. A small number of remaining fixes are scheduled for the next release cycle.

So if you keep Firefox updated, you’re largely protected from what Claude found. Mozilla moved quickly, which shows how valuable this kind of proactive security partnership can be.

Where Claude Struggled

Finding bugs turned out to be much easier for Claude than actually exploiting them. That distinction matters a lot in security research.

The Anthropic team spent $4,000 in API credits trying to build proof-of-concept exploits, which are working demonstrations that show a vulnerability can actually be weaponized. Out of 22 vulnerabilities discovered, they managed to produce working exploits in only two cases.

That gap between “finding a flaw” and “building a working attack” is significant. It suggests AI tools are genuinely powerful for vulnerability discovery, but still have real limitations when it comes to the deeper reasoning needed for exploit development.
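To put that gap in numbers, here is a quick back-of-the-envelope sketch using only the figures quoted in this article. The variable names and the helper function are illustrative, and the success rate assumes exploit attempts covered all 22 findings, which the article implies but does not spell out.

```python
# Figures quoted in the article; variable names are illustrative only.
total_vulnerabilities = 22
high_severity = 14
working_exploits = 2
exploit_api_cost_usd = 4_000  # spent on exploit attempts, not on discovery


def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Assumption: exploit attempts were made against all 22 findings.
high_severity_share = share(high_severity, total_vulnerabilities)
exploit_success_share = share(working_exploits, total_vulnerabilities)
cost_per_working_exploit = exploit_api_cost_usd / working_exploits

print(f"High-severity share: {high_severity_share}%")
print(f"Exploit success rate: {exploit_success_share}%")
print(f"API cost per working exploit: ${cost_per_working_exploit:,.0f}")
```

Under those assumptions, roughly 64% of the findings were rated high-severity, while only about 9% were turned into working exploits, at around $2,000 in API credits per successful exploit.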

What This Means for Open-Source Security

This partnership points toward something genuinely exciting for the broader open-source community. Security audits are expensive and time-consuming. Professional penetration testers charge significant fees, and major codebases like Firefox can take months to audit thoroughly.

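AI-assisted research could change that math dramatically. Surfacing 14 high-severity bugs in two weeks is remarkably fast compared to traditional methods. One caveat on cost: the $4,000 figure covers the exploit-building attempts, not the bug hunt itself, so the full price of discovery isn’t known from these numbers.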

But there’s a catch worth acknowledging. As AI security tools become more accessible, they’ll inevitably land in the wrong hands too. The same capability that helps defenders find bugs faster also helps attackers scan for weaknesses at scale. Plus, open-source maintainers already struggle with low-quality automated pull requests — and AI-generated security patches could make that problem significantly worse before it gets better.

The Firefox experiment shows AI security research works. Now the harder question is figuring out how the community builds responsible guardrails around tools this powerful.
