You scrolled past it. Liked a few posts. Maybe shared a photo of your outfit or your living room. And somewhere along the way, Instagram or TikTok quietly turned that content into a shopping ad. No notification. No permission. No payment.

This isn’t a hypothetical future. It’s already happening to influencers with millions of followers. And if it’s happening to them, there’s a solid chance it’s happening to regular users like you, too.

A Million-Follower Influencer Lost Control of Her Own Posts

In late February, fashion influencer Julia Berolzheimer discovered something unsettling. A “Shop the look” button had appeared on her Instagram posts without her knowledge. When her followers clicked it, Instagram showed them lookalike products similar to whatever she was wearing.

The problem? She hadn’t put those links there. Instagram added them automatically using AI.

“My followers were being shown cheap knockoffs and random items from brands I’ve never heard of, attached to my image, under my name,” Berolzheimer wrote on Substack. She only found out because someone else told her.

For an influencer, this is a direct financial attack. Her entire business model relies on affiliate links that earn commission when followers buy products she recommends. Instagram’s feature bypassed those links entirely, redirecting buyers to competitor products while her name and face provided the trust-building sales pitch.

Meta Says It’s Just a Test. The Damage Is Real Anyway.

Meta spokesperson Matthew T Torres called it “a limited test intended to help people explore products that match their interests when they’re viewing posts or reels.” He added that Meta doesn’t take a commission on the items and plans to refine the feature based on feedback.

But the impact is already concrete. Berolzheimer’s followers were clicking links she never approved, buying products she never vetted, from brands she’d never heard of. Her credibility did the selling. She got nothing.

And Meta’s “limited test” framing doesn’t hold up under scrutiny. A feature that attaches shopping links to someone’s content without their consent isn’t a minor tweak. It’s a fundamental change to how creator content gets used.

TikTok’s Version Has Been Running Since Last Year

Here’s the thing: Instagram didn’t pioneer this approach. TikTok has been doing something almost identical for months.

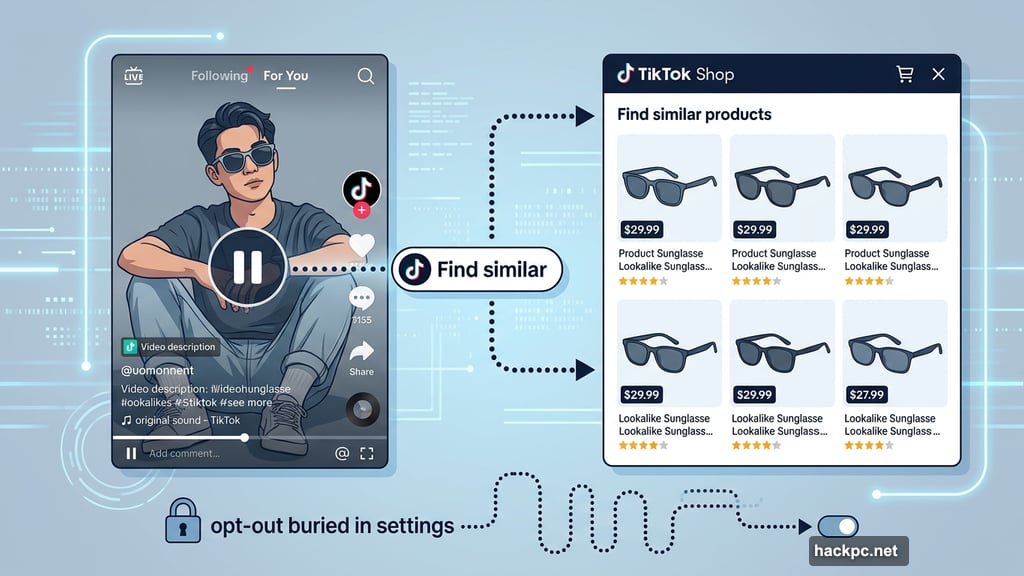

Back in September, TikTok rolled out a “Find similar” button that pops up when viewers pause a video. TikTok’s AI scans whatever appears in the frame, then recommends similar products available on TikTok Shop. Pause on someone’s sunglasses? Here are five cheap lookalikes. Watch a kids’ clothing video? Here’s a matching dress for sale.

Content creators had no idea the feature was being applied to their videos. The opt-out option was buried deep in settings. Most users never found it.

The feature also surfaced in deeply troubling contexts. Videos coming out of Gaza had TikTok Shop product recommendations attached to them, effectively turning footage of mass killings into retail promotions. TikTok said at the time it was working to fix the problem. But as of last week, the “Find similar” button still appears on clothing videos from accounts with as few as 400 followers.

This Isn’t Just an Influencer Problem

Social media commerce has always felt like influencer territory. Affiliate links, #partner disclosures, sponsored posts. That’s their world, not yours.

But the creator economy has quietly shifted. Micro-influencers and nano-influencers with tiny followings now hustle alongside the big names. Brands increasingly recruit ordinary people to make user-generated content, known as UGC, because it looks more authentic than polished ads. On platforms like Fiverr, rates for UGC creation start as low as $20.

The line between “content creator” and “regular person who posts photos” has blurred almost completely. And now the platforms are treating everyone the same way: as raw material for automated shopping recommendations.

Consider this: A reporter recently discovered that a photo of her and her husband was being used to sell picture frames. She’s not an influencer. She had no idea. The image was just useful.

That’s the new reality. Any public photo or video can become ad content. Your vacation post. Your outfit-of-the-day snap. Your apartment tour. If AI can identify products in the frame, a shopping link can follow.

The Creator Economy Promised Fame. It Delivered Exploitation Instead.

The early creator economy pitch was genuinely exciting. Anyone could build an audience, earn a living, and connect directly with people who cared about the same things. The algorithm would find you. Success was just a matter of consistency and creativity.

That promise turned out to be mostly myth. Building a real following took extraordinary luck, resources, and relentless labor. Most creators never broke through. The pandemic-era explosion of influencers beginning in 2020 flooded the market and diluted everyone’s earning potential.

Now the platforms are taking the creator economy’s central idea and flipping it. The premise was always “everyone can be an influencer.” Instagram and TikTok have just decided to skip the part where creators consent to it, profit from it, or even know it’s happening.

Instead, AI scans your content, identifies sellable products, attaches shopping links, and routes the resulting sales through the platform. You contribute the image, the trust, and the audience. They keep the transaction.

Berolzheimer framed it clearly: Her face and name were being used to sell products she’d never approved. That’s not a side effect of testing a new feature. That’s the feature working as designed.

For anyone who posts photos on Instagram or TikTok, that’s worth pausing on. Your content isn’t just content anymore. It’s inventory.
