For 25 years, Wikipedia has run on a simple promise: anyone can contribute, as long as their information is accurate and verifiable. Now, the world’s most popular encyclopedia is putting its foot down on AI-generated content.

The rule is clear. You cannot use large language models (LLMs) to create or rewrite Wikipedia articles. Full stop.

But two narrow exceptions do exist, and they’re worth knowing about.

What Wikipedia’s LLM Policy Actually Says

The platform’s official editing policy states that “text generated by large language models often violates several of Wikipedia’s core content policies.” That’s why using tools like ChatGPT or Google Gemini to generate or rewrite article content is now explicitly prohibited.

Wikipedia even names those two AI tools directly in a footnote, which sends a pretty clear message about how seriously the platform takes this.

It’s still unclear exactly when this policy went into effect. Wikipedia did not immediately respond to requests for comment.

Two Narrow Exceptions Editors Can Use

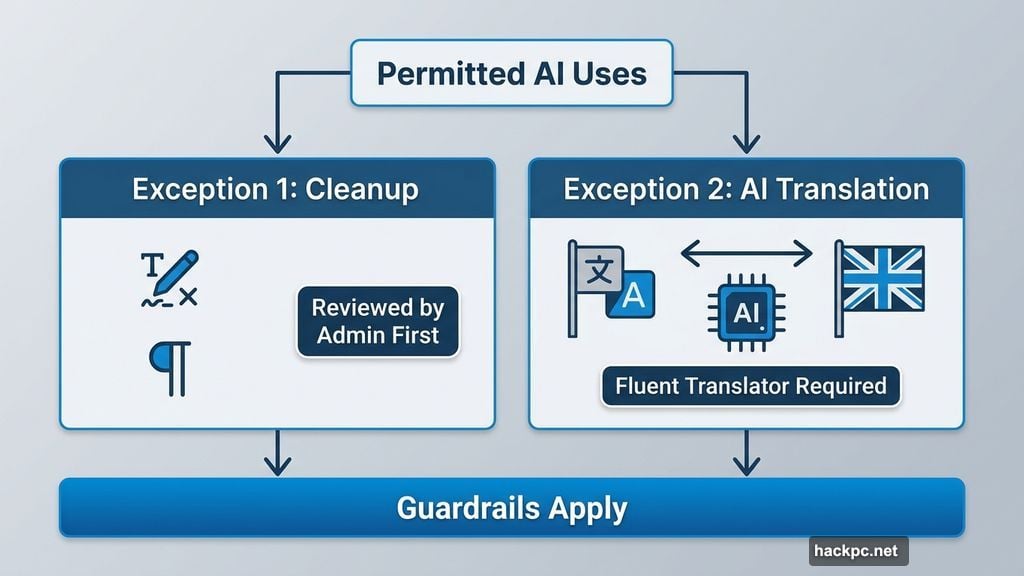

So what’s still allowed? Wikipedia does carve out two specific scenarios where AI use is permitted.

First, editors can use AI for basic cleanup tasks, like fixing typos or adjusting formatting. But there’s a catch. The article must already have been reviewed by a volunteer reviewer or administrator. And even then, Wikipedia warns that AI can subtly change the meaning of content in ways that might not align with the original source’s intent.

Second, AI-assisted translation is allowed under certain conditions. If you’re translating an article from another language’s Wikipedia into English, AI tools can help. However, the translator must be fluent in both languages to verify accuracy. The translated content must still follow all standard Wikipedia editorial policies.

That’s it. Two narrow exceptions, both with significant guardrails.

Why Wikipedia Drew This Line

This move makes a lot of sense when you consider what Wikipedia actually is. It’s an open, community-driven project where accuracy and sourcing are everything. AI tools, no matter how capable, are prone to hallucinations, meaning they can confidently produce information that sounds plausible but is simply wrong.

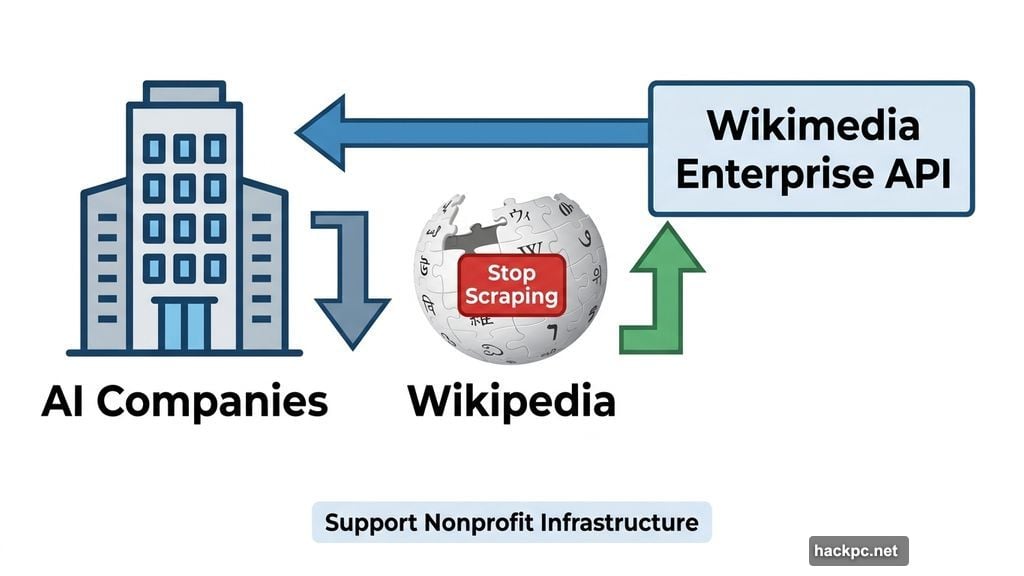

The Wikimedia Foundation has also been pushing back on AI companies more broadly. Last year, it asked AI companies to stop scraping data from Wikipedia’s servers and instead use its Enterprise API. The idea was to let companies access Wikipedia content at scale without hammering the nonprofit’s infrastructure, while also supporting its mission.

The current policy feels like a natural extension of that stance.

Enforcement Remains a Big Question Mark

Here’s where things get murky. Wikipedia’s policy doesn’t say much about how violations will actually be detected or what happens to editors who break the rules.

That’s a genuine challenge. Detecting AI-generated text is notoriously difficult, even with dedicated tools. And Wikipedia relies on a volunteer community of editors to keep things running. Enforcing a policy like this consistently across millions of articles and thousands of contributors is no small task.

So for now, the policy exists. The enforcement mechanism? Still a work in progress.

The Bigger Picture Behind the Ban

Wikipedia’s decision reflects a tension playing out across the entire internet right now. AI writing tools are faster and easier than ever. Apple Intelligence and Samsung’s Galaxy AI are already built into our phones. AI features are showing up in apps, websites, and services we use every single day.

But speed and convenience don’t automatically equal accuracy. And for a platform built entirely on verified, reliable knowledge, that trade-off doesn’t work.

Wikipedia is essentially saying that human judgment matters, especially when what’s at stake is getting information right. That’s not a small statement in 2025, and honestly, it’s hard to argue with the reasoning.

The broader question now is whether other platforms will follow suit, or whether Wikipedia ends up standing mostly alone in drawing this kind of hard line.
