Grammarly recently rolled out a feature called Expert Review. It promises writing feedback from famous thinkers, journalists, and authors. There’s just one problem. None of those experts are actually involved.

The feature launched in August 2025 as part of a broader set of AI-powered writing tools. It sits in Grammarly’s sidebar and offers revision suggestions “from the perspective” of well-known figures. Some are living writers. Some are long dead. And apparently, some are working journalists at places like The Verge, Wired, Bloomberg, and The New York Times.

So what exactly is going on here?

AI Personas, Not Real Feedback

Expert Review doesn’t connect users to actual human experts. Instead, Grammarly’s AI generates suggestions and frames them as if they came from recognizable names.

Wired first flagged that the feature mimics well-known authors without their involvement. Then The Verge noted that tech journalists from major publications were showing up too. Nobody asked these people. Nobody got their permission.

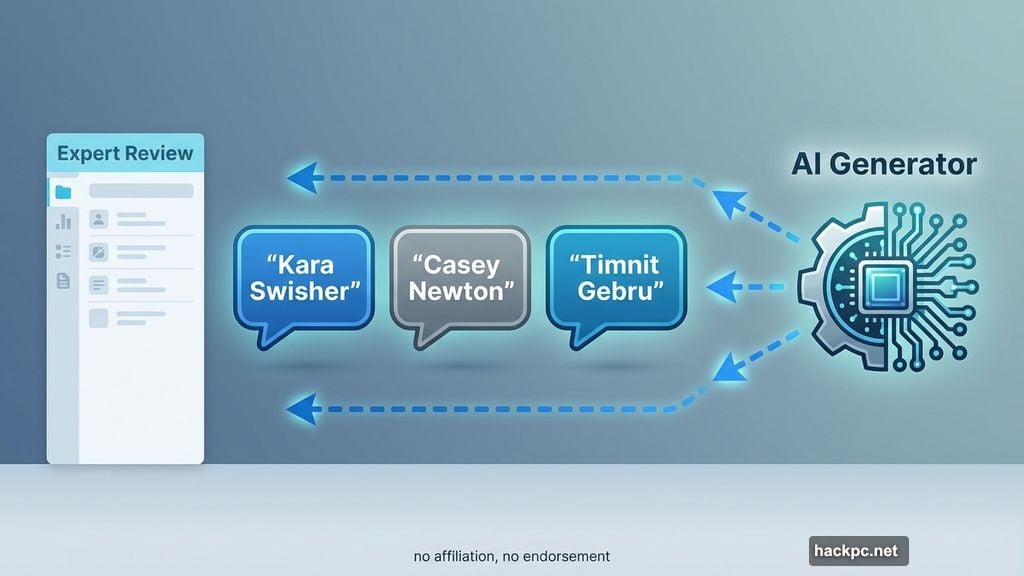

TechCrunch’s Anthony Ha tested this firsthand. He dropped a draft of his article into Grammarly to see what would happen. The feedback suggested he “add ethical context like Casey Newton,” “leverage the anecdote for reader alignment” like Kara Swisher, and “pose the bigger accountability question” like Timnit Gebru.

None of those people wrote that feedback. An AI did. It just borrowed their names to make it sound more credible.

Grammarly’s Defense Doesn’t Quite Hold Up

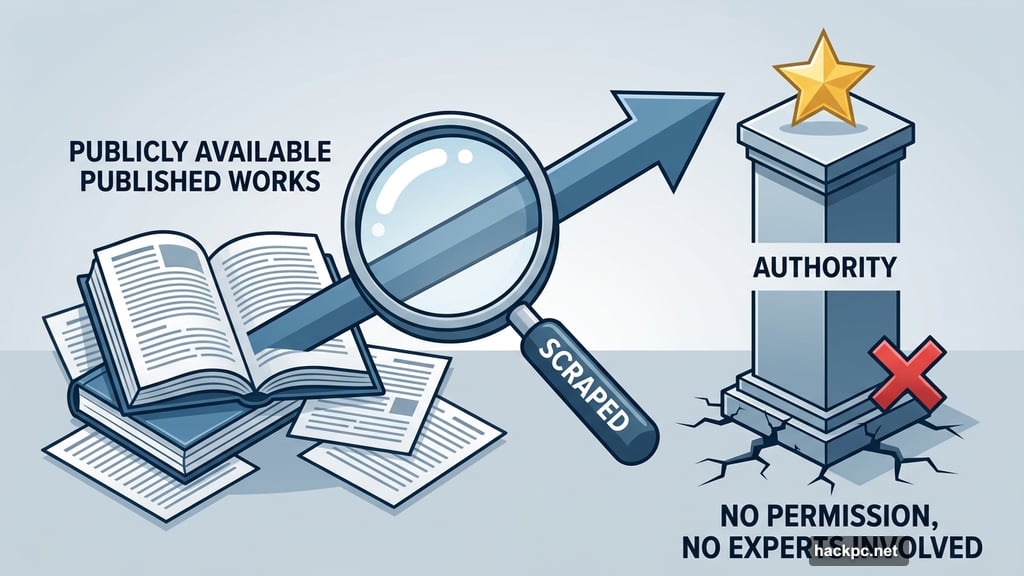

When The Verge asked Grammarly directly, Alex Gay, vice president of product and corporate marketing at Grammarly’s parent company Superhuman, explained that these figures are mentioned “because their published works are publicly available and widely cited.”

Grammarly’s own user guide is a bit more cautious. It states that “references to experts in Expert Review are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities.”

That disclaimer is clear enough on paper. But it doesn’t change what users actually experience. When a sidebar tells you to revise your paragraph “like Kara Swisher would,” that sure feels like an endorsement. The framing does a lot of work that the fine print quietly walks back.

What Historians and Critics Are Saying

The backlash wasn’t just from journalists protecting their bylines. Academics and subject matter experts pushed back too.

Historian C.E. Aubin put it plainly to Wired: “These are not expert reviews, because there are no ‘experts’ involved in producing them.”

That’s the core issue. Expert Review borrows credibility it didn’t earn. The AI generates suggestions based on publicly available writing, then attaches a famous name to make those suggestions feel more authoritative. But authority isn’t a style you can copy-paste.

There’s a real difference between “write more clearly” and “write more clearly like this specific journalist whose work you admire.” The second version feels personal and meaningful. But if that journalist has no idea their name is being used, that feeling is manufactured.

Why This Matters Beyond the Naming Problem

This isn’t just a quirky product decision. It touches something bigger in the AI writing tools space.

Tools like Grammarly have enormous reach. Millions of people use them daily for emails, essays, reports, and articles. When those tools suggest that real, named individuals are guiding your revisions, users reasonably trust that framing. Most won’t read the disclaimer buried in a user guide.

The actual journalists and thinkers named here built their reputations over careers. Using those names to add polish to AI-generated suggestions, without permission and without involvement, trades on credibility that belongs to someone else.

Plus, it sets a strange precedent. If AI tools can freely attach famous names to automated feedback, where does that stop? Today it’s writing style suggestions. Tomorrow it could be something that matters a lot more.

Grammarly may be technically covered by its disclaimers. But technically covered and genuinely transparent aren’t the same thing. Users deserve to know exactly what they’re getting when a tool promises “expert” feedback. Right now, the word “expert” is doing a lot of heavy lifting for a feature that has no actual experts in it.
