Deepfakes of real people flood YouTube every day. And until now, most of the tools fighting back were reserved for popular content creators. That’s about to change.

Starting this week, YouTube is expanding its likeness detection feature to a pilot group of journalists, government officials, and political candidates. The move signals a growing recognition that AI-generated impersonations aren’t just a celebrity problem anymore.

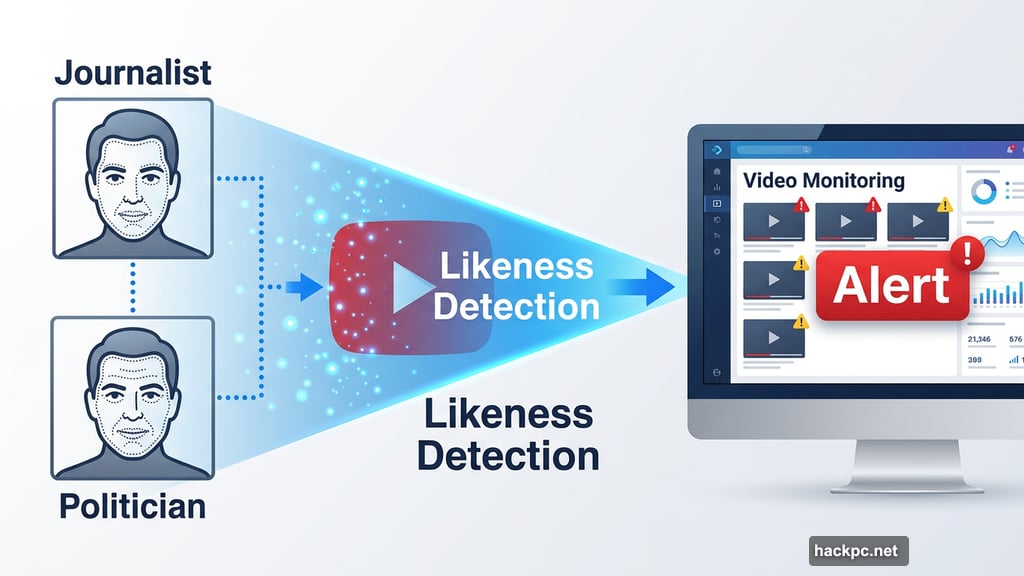

What Likeness Detection Actually Does

Think of this tool as a face-focused version of YouTube’s Content ID system. Instead of scanning for copyrighted music or video, it scans for people’s faces.

When the system finds a match, the person enrolled in the program receives an alert. From there, they can request that YouTube remove the content. But here's the catch: not every removal request gets approved.

YouTube bases its removal decisions on its existing privacy policy. That policy includes carve-outs for parody, satire, and political commentary. So if a clearly labeled satirical video features a politician, it will likely stay up.

“YouTube has a long history of protecting free expression, and that includes parody, satire, and political critique,” said Leslie Miller, YouTube’s vice president of government affairs and public policy. “We evaluate every removal request under our longstanding privacy guidelines to ensure we’re not stifling the very civic discourse we’re trying to protect.”

Signing Up Requires Real Identity Verification

To join the program, participants need to submit two things: a video of themselves and a government-issued ID. YouTube says this data stays strictly within the likeness detection feature and won’t be used for anything else.

Individuals can also withdraw from the program at any time and request that YouTube delete their submitted data. That’s a meaningful privacy safeguard, given how sensitive facial recognition data can be.

Creators Already Using It Are Mostly Watching, Not Requesting Removals

The likeness detection feature has been available to millions of content creators for a while now. So what are they actually doing with it?

Amjad Hanif, YouTube’s vice president of creator products, says the volume of removal requests is surprisingly low. Most creators, it turns out, are using the tool more like a monitoring dashboard than an enforcement mechanism.

“They may see lots of matches, and I think for a lot of them, it’s just been the awareness of what’s been created,” Hanif said. “But the volume of actual removal requests is really, really low because most of it turns out to be fairly benign or additive to their overall business.”

Politicians and journalists, however, might feel very differently about AI content mimicking their faces and voices. A deepfake of a creator hawking supplements is awkward. A deepfake of a senator endorsing a candidate is something else entirely.

Could Deepfakes Eventually Be Monetized?

Here’s a wrinkle worth paying attention to. Hanif hinted that YouTube is exploring the possibility of letting people monetize AI-generated deepfake content in the future.

“You may find that folks in the industry want to allow that, and that’s something that we’re investing in and we have a long history and experience in,” he said.

That’s a deliberately vague statement, but it suggests the platform is thinking about deepfakes not just as a content moderation problem, but also as a potential revenue stream. How that plays out for journalists and politicians who might object to any commercial use of their likeness remains to be seen.

The Bigger Picture: Deepfakes Aren’t Slowing Down

YouTube has been wrestling with AI-generated content for years. The first major wave hit with AI music that cloned real artists’ voices. Then came a flood of AI slop channels, which YouTube recently started removing under its spam policies. Fake AI movie trailers also got flagged and penalized.

But on the creator side, YouTube is simultaneously pushing AI tools hard. The platform has launched a string of AI-powered features that help creators plan, script, and optimize their videos. So the platform is fighting AI misuse with one hand while encouraging AI creation with the other.

Meanwhile, a recent New York Times investigation found that children are being fed low-quality AI videos that falsely claim to be educational. That's a reminder that the harms of deepfake-style AI content extend well beyond political disinformation.

Who Gets Protected and Who Doesn’t

Right now, the expanded protection covers journalists, government officials, and political candidates, on top of the popular content creators who already had access. Taken together, that's still a relatively small slice of humanity.

Hanif acknowledged that extending likeness detection to everyone on the platform is “probably not” on the near-term roadmap. For ordinary people who find AI deepfakes of themselves on YouTube, a manual complaint process still exists, but it lacks the proactive alerting and monitoring that this tool provides.

That gap is worth sitting with. Deepfakes of private individuals cause real harm too: harassment, reputational damage, and financial scams. The people most vulnerable to those harms are often the ones least likely to have institutional tools protecting them.

YouTube’s expansion of likeness detection is genuinely a step forward. But it’s still a feature designed for people who are already relatively powerful. The harder problem, protecting everyone else, remains largely unsolved.
