Celebrity deepfakes have become a real problem. Scam ads featuring fake versions of famous faces flood social media daily, and until recently, the people being impersonated had very little recourse.

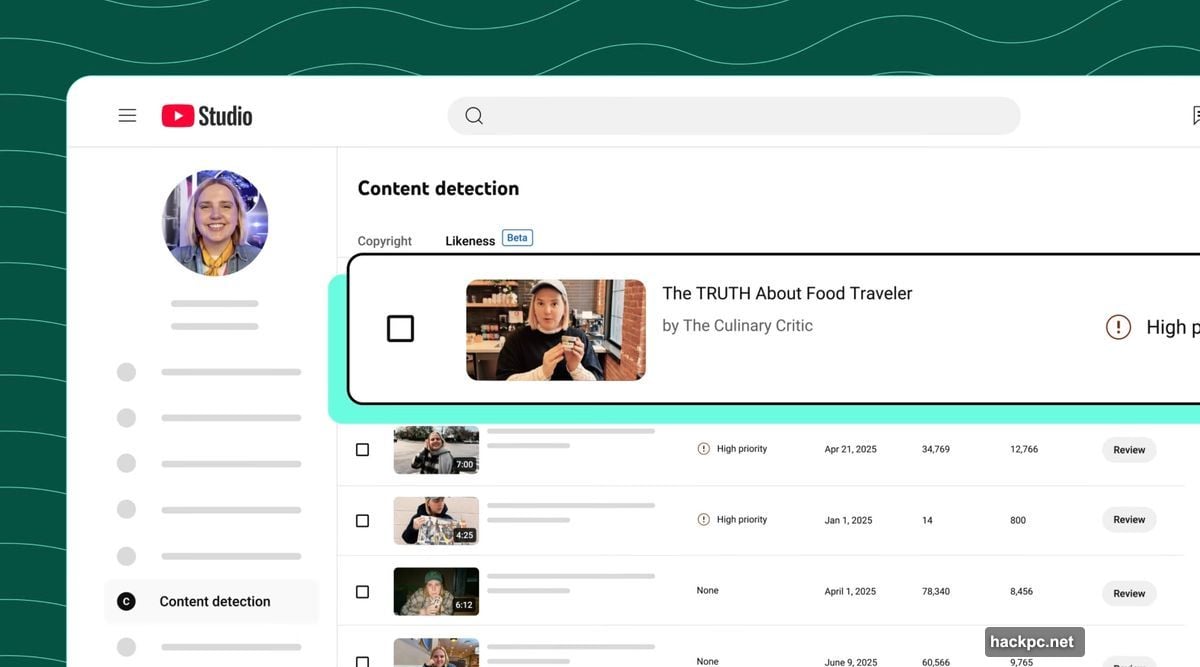

YouTube just made a significant move to fix that. The platform is rolling out its “likeness detection” technology to the entertainment industry, giving celebrities, talent agencies, and management companies a real tool to fight back against unauthorized AI-generated content.

Content ID Gets a Facelift for the Deepfake Age

If you’ve ever uploaded a video to YouTube and had a song flagged automatically, you’ve already met Content ID. That system scans uploaded videos for copyright-protected audio and visuals, then lets rights owners decide what happens next.

Likeness detection works on the same principle. But instead of hunting for a song or movie clip, it scans for someone’s face.

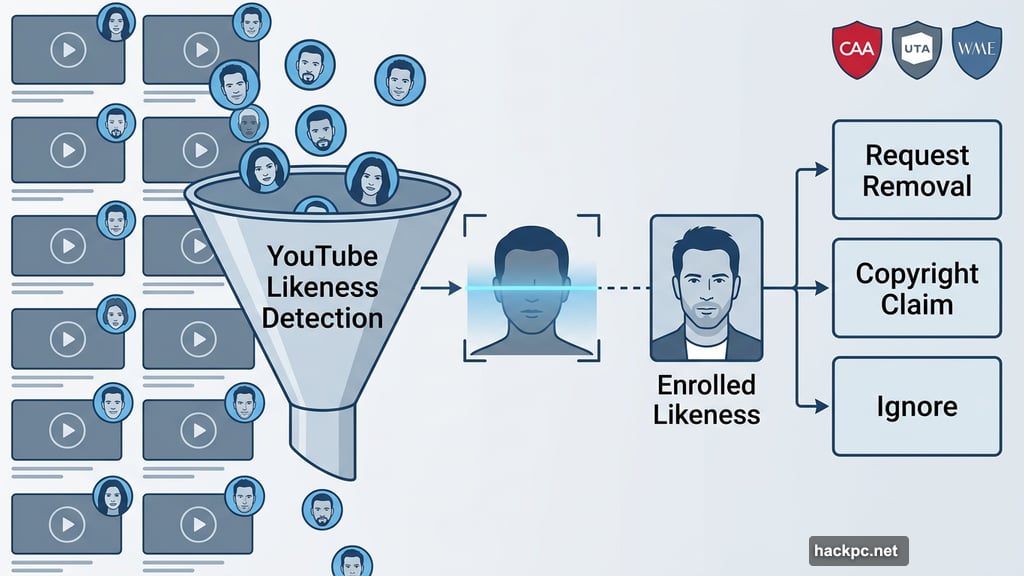

Once enrolled, the system searches YouTube for AI-generated content that visually matches a participant’s likeness. When a match shows up, the enrolled person gets options. They can request removal for privacy policy violations, submit a formal copyright claim, or simply ignore it.

Worth noting: YouTube won’t pull everything flagged. Parody and satire still get protection under platform rules, so a comedy sketch poking fun at a celebrity can stay up.

Who Gets Access Now

YouTube first tested likeness detection in a pilot program last year with a small group of creators. Then this spring, it expanded to cover politicians, government officials, and journalists.

Now the entertainment world joins that list. Talent agencies CAA, UTA, WME, and Untitled Management all provided feedback during development. That kind of industry involvement matters because these agencies represent thousands of clients whose faces could easily turn up in AI-generated scam content.

Here’s a detail that makes the tool especially practical: entertainers don’t need their own YouTube channels to enroll. The system just needs a face to scan for. So a celebrity who has never posted a YouTube video can still get protection from deepfakes appearing on the platform.

Audio Protection Is Coming Too

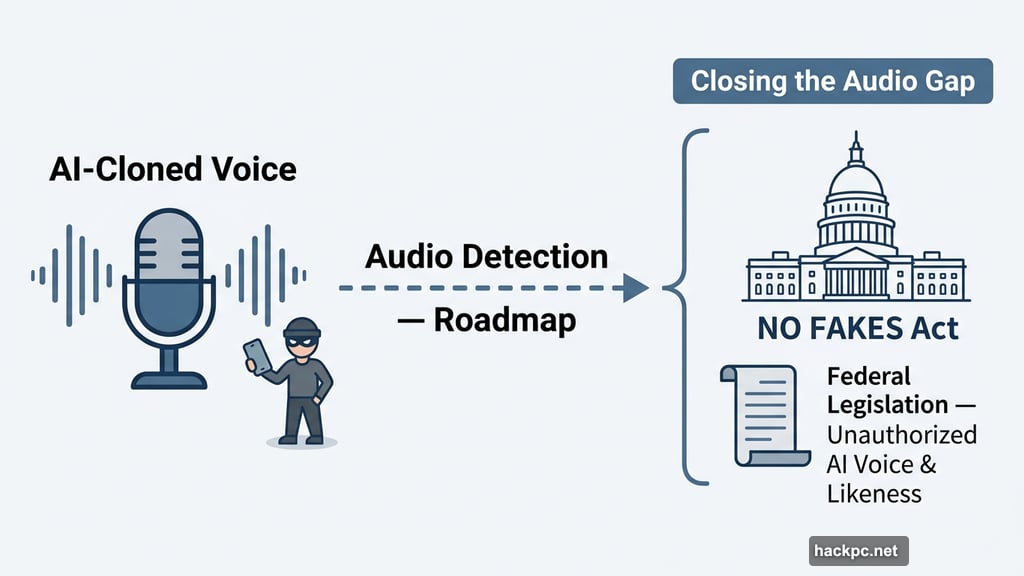

Right now, likeness detection focuses entirely on visual matches. But YouTube says audio detection is on the roadmap, which would extend the same protections to AI-cloned voices.

That’s a bigger deal than it might sound. Voice cloning technology has gotten scarily good. Scammers already use it to impersonate celebrities in phone calls and audio clips. Extending these protections to audio would close a significant gap.

The Federal Push Running Alongside This

YouTube isn’t just building tools internally. The company is also pushing for federal legislation through its support of the NO FAKES Act in Washington, D.C. That bill would regulate the use of AI to create unauthorized recreations of a person’s voice and visual likeness.

The combination makes sense. Platform-level detection handles takedowns quickly. Federal law would create real consequences for bad actors. Together, they would form a more complete defense.

How Many Deepfakes Have Been Removed?

YouTube acknowledged in March that the number of removals handled by the tool so far is still “very small.” That’s honest, and probably expected at this stage. The technology is new, enrollment is still growing, and the entertainment industry expansion only just launched.

The more interesting number will come six to twelve months from now, when agencies have fully rolled out enrollment across their client rosters and the system has had time to scale.

For celebrities who’ve spent years watching fake versions of themselves pitch cryptocurrency scams and weight loss supplements to millions of viewers, even a small number of removals is progress worth paying attention to. The technology exists. The industry support is there. Now it’s about whether the scale can match the scope of the problem.
