Deepfake videos of famous people are everywhere on YouTube. Now, those famous people finally have a real tool to fight back.

YouTube just expanded its AI-powered deepfake detection tool to a much wider audience. Actors, athletes, musicians, and other public figures can now use it to find and flag unauthorized AI-generated videos that copy their likenesses. This is the broadest rollout yet for a tool the platform has been quietly building for two years.

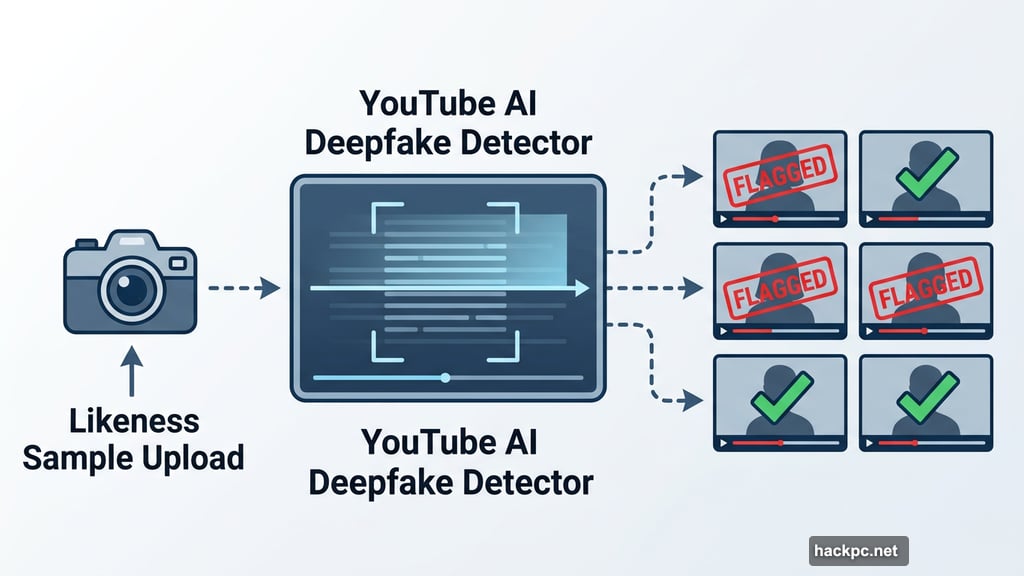

How the Deepfake Detection Tool Works

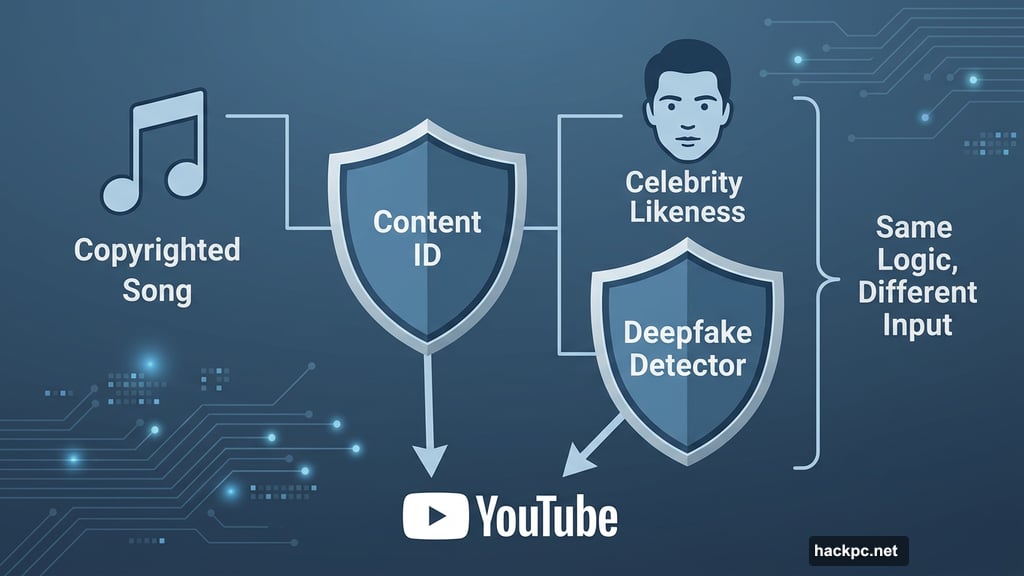

Think of it like YouTube’s Content ID system, but for faces instead of music.

Content ID has scanned uploaded videos for copyrighted songs for years. It automatically flags matches and notifies rights holders. The new deepfake detector works the same way, but it scans for people instead of songs.

To join the program, a celebrity or their representative uploads a likeness sample to the tool. From there, YouTube’s system continuously scans new uploads and flags anything that looks like an AI-generated copy. Plus, affected individuals don’t even need a YouTube account to participate or request a takedown.

That last part matters. Many celebrities and their legal teams aren’t active YouTube users. So removing that barrier makes the process much more accessible.

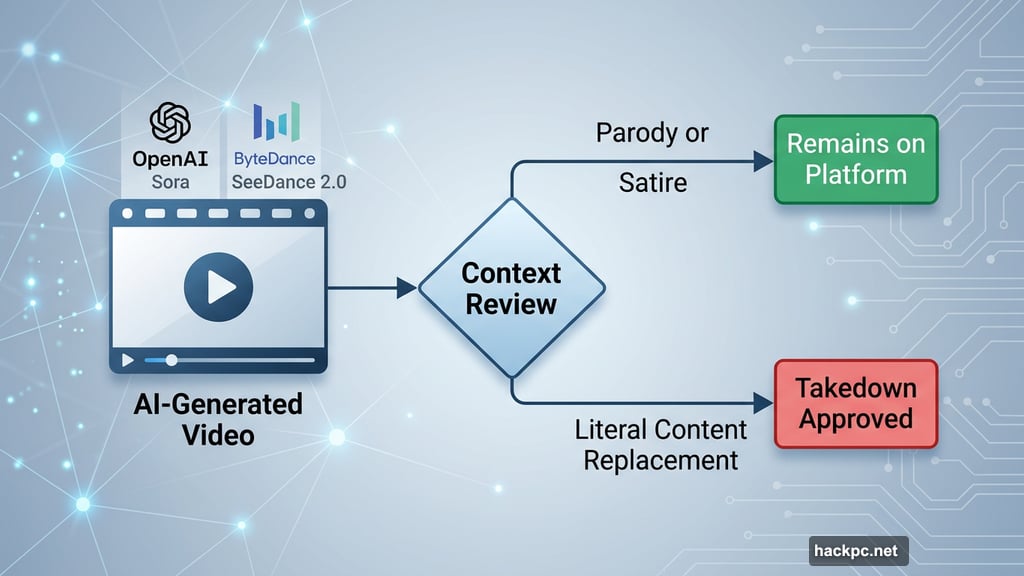

Not Every Flagged Video Gets Removed

Here’s where things get a bit nuanced. Flagging a video doesn’t automatically delete it.

YouTube’s chief business officer Mary Ellen Coe made that clear to The Hollywood Reporter. “There are a number of cases, like parody and satire, where our community guidelines would allow that to remain on the platform,” she said.

So context still matters. A satirical sketch using AI to put a celebrity’s face in an absurd situation may survive a takedown request. But a video that essentially replaces a celebrity’s actual work with an AI clone? That’s a different story.

“If someone is doing an exact replica of something that would limit the livelihood of a celebrity, an actor or a creator, because it’s literal content replacement, that would be included in a takedown,” Coe explained.

Celebrity Deepfakes Have Been a Growing Problem

This rollout didn’t happen in a vacuum. The entertainment industry has been fighting AI-generated likeness abuse for a while now.

Hollywood studios and actors have pushed back hard against major AI video generators. Tools like OpenAI’s Sora and ByteDance’s Seedance 2.0 have faced serious pressure from celebrities and major studios. Still, deepfakes keep spreading across video platforms at a pace that’s hard to contain manually.

YouTube’s tool aims to tackle the problem at scale, using automation to catch what human reviewers would miss.

Who Has Already Used This Tool

YouTube didn’t launch this to the general celebrity pool overnight. The rollout happened in stages.

The platform first tested it with some of its biggest creators, gathering real-world feedback during a pilot program. A few months ago, politicians became the next group to gain access. Now entertainers make up the latest and largest wave of new users.

During the pilot, YouTube executives noted that creators asked to remove only a small portion of flagged content. Most takedown requests focused on negative or disparaging videos rather than neutral or fan-made content.

What Comes Next for AI Likeness Rights

Coe also dropped an interesting hint about where this could eventually lead.

She suggested that rightsholders might one day choose to monetize AI-generated content featuring their likenesses rather than simply taking it down. Imagine a celebrity licensing their AI likeness for fan videos in exchange for a revenue share. That model doesn’t exist on YouTube yet, but the door seems open.

For now, though, YouTube’s focus is squarely on protection. Coe described the current priority as building a “foundational layer of responsibility and protection” for public figures whose likenesses get copied without consent.

That foundation feels overdue. AI video generation tools have made it cheaper and faster than ever to fabricate convincing content featuring real people. YouTube addressing the problem at scale is a meaningful step, even if it won’t catch everything.

The real test will be whether the system can keep pace with rapidly improving AI generation tools. For now, celebrities finally have something concrete to push back with.
